Convergence is upon us

Quantum computing’s long adolescence was always going to end not with a single breakthrough moment, but with a convergence: hardware, software, capital, and policy arriving at the same time, each making the others more credible. March 2026 looked a lot like that convergence beginning in earnest.

I am writing this in March 2026 because, over the last four to five weeks, I began to notice the dots joining up. Multiple parts of the quantum stack started referencing each other.

A wiring problem being solved at the hardware level.

A systems architecture being published that bridges the classical-quantum boundary.

A company generating real commercial revenue acquiring a chip foundry to secure its supply chain.

A government putting £2 billion on the table for domestic quantum procurement.

And Google telling the world that the encryption apocalypse is now a 2029 problem, not a 2040s one.

That’s what convergence looks like before it becomes obvious.

The Wiring Problem Has a Fix, Maybe

The hardest unsexy problem in scaling quantum computers has never been qubit count. It’s wiring.

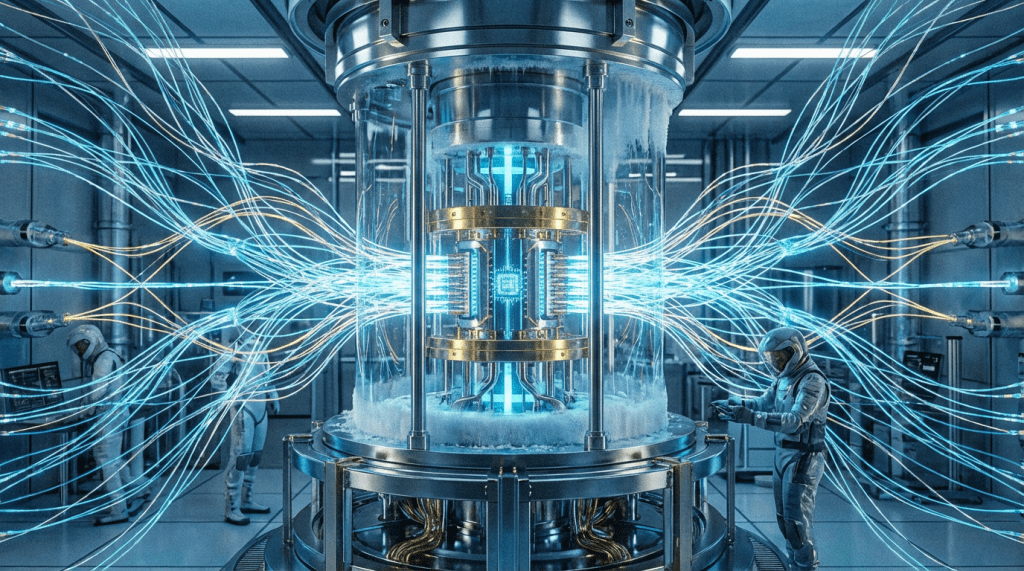

Every qubit in a superconducting system has historically needed its own control line running from room temperature down to millikelvin, roughly -273°C. That constraint alone puts a hard ceiling on system size. You can’t build a 1,000-qubit processor if you need 1,000 cables threading through a dilution refrigerator, that irresistibly cool-looking chandelier.

On March 18, SEEQC published a result in Nature Electronics that goes directly at this problem. The conventional design runs all qubit control electronics at room temperature and routes signals down into the cryogenic chamber through individual cables, one per qubit. SEEQC moved the control electronics inside the refrigerator itself, integrating them directly onto the quantum chip alongside the qubits. A five-qubit test system operated with error rates below 0.5%, and with no measurable interference between the control circuitry and the qubits it’s supposed to control. The bigger deal is that this architecture lets multiple qubits share control pathways, breaking the one-cable-per-qubit constraint and making large-scale systems physically plausible in a way they weren’t before.

This is a peer-reviewed, direct attack on the scaling bottleneck that has been quietly throttling the entire superconducting qubit roadmap.

It doesn’t make the engineering easy, but it makes it tractable in a way it wasn’t three weeks ago.

Big Blue Draws the Blueprint

IBM took the systems-level view of the same problem. On March 12, it released the industry’s first quantum-centric supercomputing reference architecture. The blueprint details how quantum processors integrate with classical GPUs and CPUs across on-premises, research, and cloud environments. This recasts the quantum-classical boundary as an engineering interface rather than a fundamental division.

The Cleveland Clinic put the blueprint to work the same week, using IBM’s quantum processor to simulate a 303-atom miniprotein, a molecule complex enough that no classical computer can model it efficiently on its own. The approach breaks the molecule into smaller pieces, hands the hardest part to the quantum processor (mapping all the possible ways the molecule’s electrons can arrange themselves), and lets a classical computer take it from there. Not quantum computing in its full promise, but a working system solving a real scientific problem that classical hardware alone couldn’t crack at this scale.

Two stories, same thesis: the classical-quantum boundary is being engineered, not waited for.

A Materials Wildcard

On the materials side, researchers identified NbRe, a niobium-rhenium alloy, as a probable triplet superconductor, capable of transmitting both electrical current and electron spin with zero resistance. If confirmed by independent groups (it hasn’t been yet), this addresses one of quantum computing’s most persistent stability challenges at the physical layer.

Early days, and the researchers themselves say so. But it’s the kind of upstream materials discovery that can reshape hardware roadmaps over a five-year horizon.

Capital Is Starting to Sort Winners from Story Stocks

The industry’s capital picture this month split sharply along a line that matters.

IonQ: Real Revenue

IonQ reported $130 million in 2025 GAAP revenue, up 202% year-over-year, beating its own guidance by 20%. Sixty percent came from commercial customers, not governments or research institutions. This is the first time any public quantum company has crossed $100 million in annual revenue, and the commercial mix signals that trapped-ion systems are generating real procurement, not just goodwill.

The Acquisition

Then IonQ moved on the supply chain. The company announced the $1.8 billion acquisition of SkyWater Technology, a US-based semiconductor foundry with facilities in Minnesota, Florida, and Texas. The stated rationale: create the only vertically integrated full-stack quantum platform company. The operational rationale: don’t depend on anyone else for fabrication as you scale to 200,000-qubit systems by 2028.

The IonQ-SkyWater shareholder vote is May 8. If it closes, IonQ will have domestic quantum production hubs and a fabrication backbone that no competitor currently has.

That’s not a bet on quantum computing’s future; it’s a bet on who manufactures it.

The Contrast

Contrast this with Quantum Computing Inc., which reported Q4 2025 revenue of $198,000.

Thousands, not millions, while holding $1.6 billion in total assets following a $750 million private stock placement. Revenue grew 219% year-over-year, which sounds impressive until you note that 219% growth on almost nothing is still almost nothing. Total 2025 revenue across all four quarters: $682,000. QCI is building a photonic chip fabrication facility (Fab 1) and has real technology ambitions.

But investors valuing the company on its capital raise rather than its commercial traction are taking a fundamentally different bet than IonQ’s: speculation on optionality, not a claim on a category.
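The growth-rate arithmetic behind this contrast is easy to sanity-check. Here is a minimal Python sketch, assuming the figures quoted above are exact and that "up N%" means the current figure is (1 + N/100) times the prior one. The helper name `implied_prior` is hypothetical, and the outputs are implied values, not reported ones.

```python
def implied_prior(current: float, growth_pct: float) -> float:
    """Back out the prior-period figure implied by a year-over-year growth rate."""
    return current / (1 + growth_pct / 100)


# Figures quoted in this section, in US dollars.
ionq_2025 = 130_000_000   # IonQ 2025 revenue, reported as up 202%
qci_q4_2025 = 198_000     # QCI Q4 2025 revenue, reported as up 219%

ionq_prior = implied_prior(ionq_2025, 202)
qci_prior = implied_prior(qci_q4_2025, 219)

print(f"IonQ implied 2024 revenue:    ${ionq_prior:,.0f}")
print(f"QCI implied Q4 2024 revenue:  ${qci_prior:,.0f}")
print(f"IonQ absolute increase:       ${ionq_2025 - ionq_prior:,.0f}")
print(f"QCI absolute Q4 increase:     ${qci_q4_2025 - qci_prior:,.0f}")
```

Both companies roughly tripled their top line, but the implied bases are about $43 million for IonQ and about $62,000 for QCI’s quarter. Similar percentages, absolute changes that differ by orders of magnitude.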

Upstream Bets

Upstream, the supply chain is drawing early-stage capital. Cryogenic electronics startup Rhonexum closed a $1 million pre-seed to miniaturize electronics that function at extreme cold, directly adjacent to the wiring bottleneck SEEQC attacked at the chip level.

The US Department of Energy awarded Infleqtion $3.9 million through ARPA-E, targeting quantum simulation for battery chemistry and catalysis: near-term commercial energy applications that could generate early evidence of practical quantum utility.

UK’s £2B Commitment

The UK government wrote the largest single check of the month: £2 billion for ProQure, a national programme to procure and build large-scale quantum computers on UK soil by the early 2030s. The UK is the first country to make this kind of procurement commitment. Within days, Rigetti announced a £100 million investment in the UK and a plan to deploy a 1,000+ qubit system within four years.

When a government creates a credible procurement market, it changes who shows up and on what timeline.

Q-Day is Nigh-er

On March 25, Google moved its Q-Day estimate to 2029.

Q-Day is the date by which a sufficiently capable quantum computer could break RSA and elliptic curve encryption.

The previous conventional estimate was somewhere in the 2040s. Google’s revised timeline, driven by faster-than-expected progress in error correction and hardware, compresses the migration window to roughly three years for any organization holding sensitive data with multi-year exposure.

The “harvest now, decrypt later” threat is the reason this matters immediately rather than abstractly. Adversaries, state-level and otherwise, are already collecting encrypted data with the intent to decrypt it when hardware matures.

If your data is sensitive in 2029, it’s already a target today.

Google’s warning is not a theoretical upgrade; it’s an operational problem for anyone in finance, national security, pharmaceuticals, or critical infrastructure.
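One commonly cited way to frame this exposure is Mosca’s inequality, which is my framing here and not something taken from Google’s announcement: data is at risk if the years it must stay secret plus the years needed to migrate exceed the years until Q-Day. Here is a minimal Python sketch, assuming a 2026 starting point and the estimates quoted above. The function name and the example shelf-life and migration figures are illustrative assumptions, not data from any organization.

```python
def exposed_to_harvest(shelf_life_years: float,
                       migration_years: float,
                       years_to_q_day: float) -> bool:
    """Mosca's inequality: at risk when shelf life + migration time > time to Q-Day."""
    return shelf_life_years + migration_years > years_to_q_day


CURRENT_YEAR = 2026                       # assumption: the article's vantage point
YEARS_TO_2029 = 2029 - CURRENT_YEAR       # Google's revised estimate
YEARS_TO_2040 = 2040 - CURRENT_YEAR       # low end of the older "2040s" estimate

# Hypothetical workload: records that must stay confidential for 7 years,
# held by an organization that needs 3 years to migrate to PQC.
shelf_life, migration = 7, 3

print("At risk under 2029 estimate:", exposed_to_harvest(shelf_life, migration, YEARS_TO_2029))
print("At risk under 2040 estimate:", exposed_to_harvest(shelf_life, migration, YEARS_TO_2040))
```

Under these assumed inputs, the same workload moves from safe to exposed purely because the Q-Day estimate moved. The sketch ignores everything else that matters in practice, such as which data is actually being intercepted.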

The Policy Window Is Not Symmetric

The policy scaffolding being built around this urgency is extensive. The White House circulated a draft executive order, titled “Ushering In The Next Frontier Of Quantum Innovation,” in February. This would direct OSTP, Commerce, Energy and Defense to deliver an updated National Quantum Strategy within 180 days, launch a federally backed quantum computer for scientific applications at a DOE facility, and build a workforce pipeline through NSF’s new QIST Education Institutes.

It hasn’t been signed. But the institutional machinery it implies is already moving: Representative Feenstra from Iowa introduced a bill on March 6 to expand NSF quantum modeling and simulation programming under the National Quantum Initiative Act, specifically to allow developers to build applications before fault-tolerant hardware fully arrives.

The strategic read here is straightforward: countries that establish domestic fabrication capacity, procurement pipelines, and PQC migration mandates in the next 24 months will not be buying quantum capability from others in the 2030s. The window is not symmetric.

Early movers are building infrastructure; late movers will be customers.

What I Will Be Watching Next

Three signals worth tracking over the next few weeks:

The White House EO. Whether it gets signed, and in what form, will signal how seriously the current administration treats quantum as a strategic priority distinct from AI. The draft is serious. The signature is not guaranteed.

IonQ-SkyWater shareholder vote (May 8). If it passes, watch how fast IonQ moves to operationalize fabrication. The speed of integration will be an early indicator of whether this is a supply chain play or a financial engineering move.

NIST and post-quantum cryptography. Google’s 2029 Q-Day warning should accelerate migration mandate timelines. If NIST or sector regulators respond with tighter deadlines, it creates immediate commercial demand for PQC implementation, a category that is underappreciated relative to hardware in most quantum coverage.

Disclosure and Disclaimer:

QC sits at the intersection of frontier technology, engineering, physics, investments, geopolitics, and policy. All things that I love geeking out about. This is my honest attempt at a macro analysis of the quantum computing industry. I hold long positions in some of the companies covered in this analysis. If you found this useful, share it with someone who should be paying attention. But do not treat this as investment advice and always make your own assessment before making investment decisions.
